What is Chain of Thought?

Most chat interfaces hide the model’s reasoning. You send a message, the model processes it internally, and you get an answer — with no visibility into how it got there. For simple questions that’s fine. For complex ones — multi-step math, debugging logic, structured analysis — the answer alone isn’t always enough.

Chain of Thought (CoT) is a prompting technique that makes the reasoning process explicit. Instead of jumping straight to a conclusion, the model works through the problem step by step, producing a visible reasoning trace before the final response.

Without CoT:

    User: "Is 97 prime?"
    Model: "Yes."

With CoT:

    User: "Is 97 prime?"
    Model: [thinking]
    97 ÷ 2 = 48.5 (not integer)
    97 ÷ 3 = 32.3 (not integer)
    97 ÷ 5 = 19.4 (not integer)
    97 ÷ 7 = 13.9 (not integer)
    √97 ≈ 9.8, checked all primes ≤ 9
    [/thinking]
    Yes, 97 is prime.

The thinking trace is visible in the UI but visually separated from the final answer, so you get the transparency without it getting in the way.
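The check in the trace above is plain trial division: a number n greater than 1 is prime if no prime p with p² ≤ n divides it. As a rough illustration of the kind of steps a reasoning trace spells out, here is a minimal TypeScript sketch. The function name `isPrimeWithTrace` and the wording of the steps are made up for this example and are not part of the project.

```typescript
// Trial division with a human-readable trace.
// Illustrative only: the name and output format are assumptions.
function isPrimeWithTrace(n: number): { prime: boolean; steps: string[] } {
  const steps: string[] = [];
  if (!Number.isInteger(n) || n < 2) {
    steps.push(`${n} is not an integer greater than 1`);
    return { prime: false, steps };
  }

  const primes: number[] = []; // primes found so far among the candidate divisors
  for (let d = 2; d * d <= n; d++) {
    // Skip composite candidates: they are divisible by an earlier prime.
    if (primes.some((p) => d % p === 0)) continue;
    primes.push(d);

    if (n % d === 0) {
      steps.push(`${n} ÷ ${d} = ${n / d} (integer)`);
      return { prime: false, steps };
    }
    steps.push(`${n} ÷ ${d} = ${(n / d).toFixed(1)} (not integer)`);
  }

  steps.push(`no prime p with p² ≤ ${n} divides ${n}`);
  return { prime: true, steps };
}

// Usage: print the trace for the example above.
const result = isPrimeWithTrace(97);
console.log(result.steps.join("\n"));
console.log(result.prime ? "Yes, 97 is prime." : "No, 97 is not prime.");
```

Only 2, 3, 5, and 7 are tried for 97 because the loop stops once d² exceeds n, which is the same bound the trace states with √97 ≈ 9.8.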

The Project

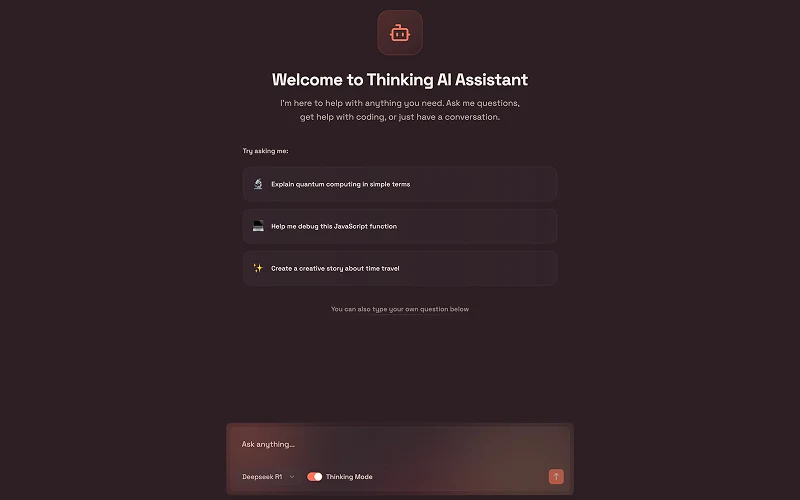

Reasoning Assistant is a Next.js chat interface built on top of Groq’s inference API. Groq provides fast inference for reasoning-capable open models, making the streaming latency low enough to feel responsive even when the thinking trace is long.

Users can switch between models and toggle thinking mode on or off per conversation:

| Model | Provider | Thinking |
|---|---|---|
| DeepSeek R1 | Groq | Yes |
| Qwen3 32B | Groq | Yes |
| Gemma2 9B | Groq | No |
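One way to carry this table into code is a small typed registry plus a helper that decides whether a thinking trace should be shown. This is a sketch under stated assumptions, not the project's actual code: the keys (`deepseek-r1`, `qwen3-32b`, `gemma2-9b`) are illustrative labels rather than verified Groq model IDs, and `ChatState`, `switchModel`, and `thinkingEnabled` are hypothetical names.

```typescript
// Hypothetical registry mirroring the table above.
// The keys are illustrative labels, NOT verified Groq model IDs.
type ModelKey = "deepseek-r1" | "qwen3-32b" | "gemma2-9b";

interface ModelInfo {
  label: string;
  provider: "Groq";
  supportsThinking: boolean;
}

const MODELS: Record<ModelKey, ModelInfo> = {
  "deepseek-r1": { label: "DeepSeek R1", provider: "Groq", supportsThinking: true },
  "qwen3-32b": { label: "Qwen3 32B", provider: "Groq", supportsThinking: true },
  "gemma2-9b": { label: "Gemma2 9B", provider: "Groq", supportsThinking: false },
};

interface ChatMessage {
  role: "user" | "assistant";
  content: string;
}

interface ChatState {
  model: ModelKey;
  thinkingRequested: boolean; // the user's toggle
  messages: ChatMessage[];
}

// Switching models changes only the model field; the message history is kept.
function switchModel(state: ChatState, model: ModelKey): ChatState {
  return { ...state, model };
}

// A trace is shown only if the user asked for it AND the model can produce one.
function thinkingEnabled(state: ChatState): boolean {
  return state.thinkingRequested && MODELS[state.model].supportsThinking;
}
```

Keeping the capability flag next to the model entry means the toggle can be disabled in the UI for models like Gemma2 9B instead of silently doing nothing.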

Features

Toggleable thinking mode — reasoning traces are opt-in. Turn it on for complex questions, off when you just want a quick answer.

Real-time streaming — both the thinking trace and the final response stream token by token via the Groq SDK’s streaming API, so there’s no waiting for a complete response before anything appears.

Markdown rendering — responses render with full markdown support including syntax-highlighted code blocks, useful for programming and technical questions.

Model switching — swap between models mid-conversation without losing the chat history.
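To stream the thinking trace and the answer separately, the client has to know which part each incoming chunk belongs to. The sketch below assumes the model's raw text wraps its reasoning in `<think>…</think>` tags. That is an assumption about the output format, and the project may instead receive the reasoning in a separate field. `ThinkSplitter` and `partialTagLength` are hypothetical names. The main subtlety is that a tag can be split across two chunks, so a trailing partial tag is held back until the next chunk arrives.

```typescript
type Segment = { kind: "thinking" | "answer"; text: string };

const OPEN = "<think>"; // assumed tag format, see lead-in
const CLOSE = "</think>";

// Length of the longest suffix of `text` that is a proper prefix of `tag`.
function partialTagLength(text: string, tag: string): number {
  const max = Math.min(text.length, tag.length - 1);
  for (let len = max; len > 0; len--) {
    if (text.endsWith(tag.slice(0, len))) return len;
  }
  return 0;
}

class ThinkSplitter {
  private buffer = "";
  private inThinking = false;

  // Feed one streamed chunk; returns the segments that are safe to render now.
  push(chunk: string): Segment[] {
    this.buffer += chunk;
    const out: Segment[] = [];
    while (this.buffer.length > 0) {
      const tag = this.inThinking ? CLOSE : OPEN;
      const idx = this.buffer.indexOf(tag);
      if (idx !== -1) {
        // Complete tag found: emit the text before it, then switch mode.
        this.emit(out, this.buffer.slice(0, idx));
        this.buffer = this.buffer.slice(idx + tag.length);
        this.inThinking = !this.inThinking;
        continue;
      }
      // No complete tag: hold back a possible partial tag such as "<thi".
      const keep = partialTagLength(this.buffer, tag);
      this.emit(out, this.buffer.slice(0, this.buffer.length - keep));
      this.buffer = this.buffer.slice(this.buffer.length - keep);
      break;
    }
    return out;
  }

  // Call once when the stream ends to release any held-back text.
  flush(): Segment[] {
    const out: Segment[] = [];
    this.emit(out, this.buffer);
    this.buffer = "";
    return out;
  }

  private emit(out: Segment[], text: string): void {
    if (text.length === 0) return;
    out.push({ kind: this.inThinking ? "thinking" : "answer", text });
  }
}
```

In a UI, each returned segment would be appended to either the thinking panel or the answer bubble as it arrives, and `flush()` would run when the stream closes.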

Tech Stack

| Layer | Tech |
|---|---|
| Framework | Next.js 15 (App Router), React 19, TypeScript |
| Styling | Tailwind CSS v4, Radix UI |
| AI inference | Groq SDK |
| Markdown | React Markdown with syntax highlighting |
| Icons | Lucide React |

Setup

    git clone https://github.com/akdevv/reasoning-assistant.git
    cd reasoning-assistant
    bun install

Add your Groq API key to .env.local:

    GROQ_API_KEY=your_groq_api_key_here

Then start the development server:

    bun dev

Key Decisions

Why Groq instead of calling OpenAI or Anthropic directly? Groq runs inference on its own custom hardware, which makes token generation significantly faster than typical hosted API endpoints for the models it supports. In a chat interface that streams a long reasoning trace, that speed difference is easy to feel. DeepSeek R1 and Qwen3 are also available on Groq's free tier, so the demo works without a paid API key.

Why make thinking mode a toggle? A visible reasoning trace is genuinely useful for some prompts and noise for others. Forcing it on for every message would make simple conversations tedious. Letting users opt in per conversation respects the fact that CoT is a tool, not a default.

Why keep the thinking trace visually distinct from the answer? The reasoning trace can be long — sometimes longer than the answer itself. Mixing it inline with the response would make the output hard to read. Separating it visually (collapsible or dimmed) lets you reference the thinking without it dominating the conversation view.

Outcome

A minimal but complete reasoning-aware chat interface. The project explores how to present model transparency — making the AI’s thinking process visible — without overwhelming the user or adding friction to everyday use.